The recent trend toward the use of big data in our everyday lives has become its own narrative in the news. Even the White House has weighed in; the Obama administration recently released a report on the use of big data and its implications for education, health, advertising, and the economy, including a section dedicated to the technology’s potential use in employment, particularly in recruitment.

Yes, it’s true that large employers are turning to computer algorithms to determine who is and is not a good fit for a job. Although the results consistently suggest that these “robot recruiters” are effective at picking employees who stay longer on the job and perform better, there is still some skepticism as to whether computers can replace human judgment when it comes to evaluating talent, and some concern about their potential to discriminate.

What’s important to recognize is that the current system isn’t perfect; recruiters aren’t unbiased. In fact, a long line of empirical research documents a “like me” bias that leads recruiters to hire applicants like themselves. This may benefit job applicants who happen to have gone to the same school as the interviewer, but unfortunately, it tends to hurt anyone who didn’t. The inevitable outcome of this bias is that the job does not necessarily go to the most talented or skillful individual, but rather to the applicant the recruiter likes the most. Not only that, but hiring like-minded individuals may also reduce diversity in the workplace.

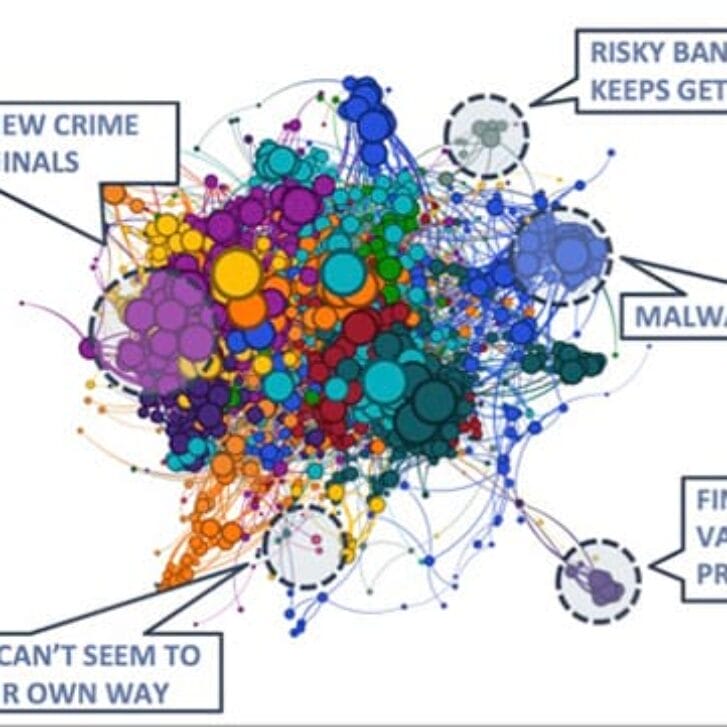

Contrast this with the algorithms that have been built to select the best applicants. These algorithms are designed to make assessment decisions based on the factors that actually matter and that have been statistically correlated with on-the-job performance and outcomes. They’re also engineered to ensure that they have no “adverse impact” on groups protected on the basis of gender, race and age. In essence, algorithms have been trained to select the most talented applicants and to ignore whether an applicant went to Harvard and plays squash.
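For readers who want to see what an “adverse impact” check can look like in practice, here is a minimal sketch. It assumes the “four-fifths rule” of thumb from the EEOC’s Uniform Guidelines, under which a group whose selection rate falls below 80 percent of the highest group’s rate is flagged for review. That rule is my choice for illustration, not a description of any particular vendor’s method, and the function names and the sample group labels are hypothetical.

```python
from collections import Counter


def selection_rates(applicants):
    """Return the share of applicants selected in each group.

    `applicants` is an iterable of (group, selected) pairs, where
    `selected` is True if the applicant passed the screen.
    """
    totals = Counter()
    selected_counts = Counter()
    for group, selected in applicants:
        totals[group] += 1
        if selected:
            selected_counts[group] += 1
    return {group: selected_counts[group] / totals[group] for group in totals}


def adverse_impact_ratios(rates):
    """Divide each group's selection rate by the highest group's rate."""
    best = max(rates.values())
    if best == 0:
        # Nobody was selected at all, so there is no disparity to measure.
        return {group: 1.0 for group in rates}
    return {group: rate / best for group, rate in rates.items()}


def flagged_groups(ratios, threshold=0.8):
    """List groups whose ratio falls below the threshold (assumed: four-fifths)."""
    return sorted(group for group, ratio in ratios.items() if ratio < threshold)


if __name__ == "__main__":
    # Made-up data: 5 of 10 selected in group "A", 3 of 10 in group "B".
    sample = (
        [("A", True)] * 5 + [("A", False)] * 5
        + [("B", True)] * 3 + [("B", False)] * 7
    )
    rates = selection_rates(sample)
    ratios = adverse_impact_ratios(rates)
    print(rates)
    print(ratios)
    print(flagged_groups(ratios))
```

A flag from a check like this is a prompt to look more closely at the screening step, not proof of discrimination; small samples in particular can produce ratios below the threshold by chance.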

The data support this claim. In fact, a white paper will be released shortly by researchers at the University of Toronto, Yale and Northwestern that analyzes hundreds of thousands of hires and finds that the adoption of job testing is associated with a 20 percent reduction in quitting behavior.

If anything, it’s more likely that online assessments reduce bias in the hiring process. Consider the fact that recruiters typically spend approximately seven seconds screening each resume. What do they look for? Among other things, they look for previous work history and job-relevant experience. San Francisco technology company Evolv, where I serve as chief analytics officer, has released studies demonstrating conclusively that job hoppers and the long-term unemployed stay just as long and perform just as well as individuals with a more typical work history. These are factors that shouldn’t play a role in the screening process, yet 2 to 6 percent of all job applicants are dismissed immediately because of a less-than-traditional work history. Prehire screening reduces personal biases by allowing job hoppers and the long-term unemployed to be considered on the basis of their true knowledge, skills and abilities.

The fact that computers are playing a bigger role in the hiring process causes some trepidation, but it’s important to realize that these algorithms aren’t meant to replace recruiters. They’re simply intended to arm recruiters with more information, which they can use to make better-informed decisions. It’s an exciting era, not only because the technology is capable of issuing recommendations about something as complicated as hiring, but also because this capability is going to give a fair shot to millions of job applicants who wouldn’t have been considered previously.