
It’s been seven weeks since the Cambridge Analytica scandal rocked Facebook. Here’s a short history: Facebook shares plunged 18 percent in 10 days, dragging the NASDAQ index down 10 percent as well. Mark Zuckerberg’s mea culpa in Congress played to decent reviews. Now Facebook and the NASDAQ have stabilized, and the trade war with China seems the bigger threat to the bull market.

So was the Facebook wobble a blip or a sea change?

Blip, in the sense that the chances of another 2001-style dotcom bubble bursting are small: today’s tech companies and their products are so much more real (read: generating not only revenue but also profit), and there is no sign of the innovation S-curve flattening in Silicon Valley.

Potential sea change, in the sense that Facebook’s Cambridge Analytica problem was only the most visible example of a much broader and deeper phenomenon.

The core business model of many tech firms is monetizing the data they collect from users, not only for their own use but also for sale to others. Not everyone is a privacy hawk, and millennials are less so than earlier generations. And of course, we all click through the mind-numbing agreements asking us to “agree” to and “accept” how tech firms tell us they may use the data we voluntarily give them.

But there is a powerful political dynamic right below the surface: The more visible and widely understood the tech business model of monetizing user-generated data becomes, the more people will be upset about it, and the more likely it is that the government will try to respond through some sort of regulation (even if the regulation is misguided, ineffective, or both).

The European Union rarely predicts America’s future. One exception may be the public pushback against tech, with lots of Washington attention focused on the EU’s new General Data Protection Regulation, following years of large-scale fines against big American tech firms for violating European rules and norms where data, privacy, and monopolies are concerned.

I think the monopoly point might be the most important because it multiplies (geometrically) worries about data privacy. Facebook and Google are so big that there is a plausible prima facie argument that they can no longer be regulated by market forces; the government will have to step in. Given that their core business model is monetizing information on the behavior of users, the concern is about 21st century consumer protection on an unprecedented scale. Just think about the Obama administration’s consumer protection clampdown on banks after the housing crisis last decade.

Consumer protection is different from regulating tech firms as if they were media companies (the other oft-quoted “existential risk” to tech). It is not so much about the truthfulness and community standards of content as it is about firms preying on customers.

So perhaps the parallels between the railroads and banks of early 20th century America and the tech firms of today are not so far-fetched. Rockefeller and Carnegie are now remembered above all for their philanthropy. But it should not be forgotten that their early giving was about trying to curry public favor at a time when Washington was trying to break up their trusts.

Moreover, Facebook’s sudden embrace of privacy concerns is a tried-and-true business move: Go with self-regulation because it’s much better than having the government step in.

So, what happens next? I think three things are likely:

1. Big advantage goes to tech companies that can demonstrate a history of taking data and privacy concerns more seriously, and/or whose business doesn’t rely heavily on monetizing user data. Apple, Amazon, and Microsoft clearly believe this is a big opportunity for them to differentiate themselves from Facebook and Google.

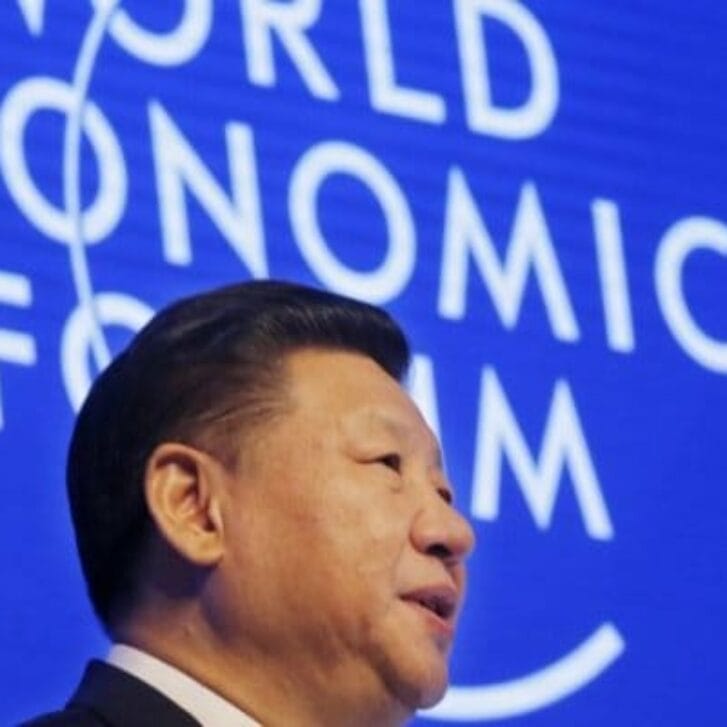

2. Big advantage to Alibaba and Tencent, because, paradoxically, data privacy worries have much less public resonance in China than in the U.S., at least in part because of broader concerns about the Chinese government’s ability to use technology to monitor the behavior of its citizens more closely. It is also likely that emerging markets will be less privacy-sensitive than America. So, there’s little reason to expect the global rise of China’s tech titans to slow down anytime soon.

3. Big challenges for other industries that combine high tech with using personal data to drive the business—and hence where consumer protection looms large. Think autonomous vehicles, where every tragic death associated with experimentation—no matter what the underlying cause—creates a wildfire of criticism and conservatism. But also think about health tech, where the FDA is always a massive hurdle for innovators to overcome.

Facebook/Cambridge Analytica swallowed all the media headlines because of its tie-in with Trump/Russia. But in the long term, I suspect its enduring impact will be putting the monetization of personal data in the political spotlight. No doubt tech firms will do all they can to manage this challenge, and I certainly am not willing to bet against them. But it is a big challenge nonetheless, because we are talking about the core pure-play tech business model.

Editor’s note: This post was originally published on Dean Geoffrey Garrett’s LinkedIn page, where he was named an “influencer” for his insights in the business world. Geoffrey Garrett is Dean, Reliance Professor of Management and Private Enterprise, and Professor of Management at the Wharton School of the University of Pennsylvania.